AI has played a central role in multilingual content workflows for many years. Steady advances in Machine Translation over decades have driven consistent adoption across industries. In parallel, the industry has developed human methodologies and quality frameworks to assess linguistic soundness at scale.

What has changed is not whether AI is used, but how broadly and how critically it is applied.

Today’s large language models are tackling a far wider range of problems. We are not just translating with AI anymore. We are generating, adapting, transcreating, reviewing, and editing. The scope has expanded from language conversion to content transformation.

At the same time, AI-powered localization is no longer experimental. Two years ago, many teams were still exploring whether AI could support their translation workflows. Now AI is pervasive and often expected. Budget assumptions increasingly reflect the belief that AI will make everything faster and cheaper.

As AI takes on more complex, brand-sensitive, and high-impact tasks, evaluating its performance becomes significantly harder.

Many teams still struggle to answer a deceptively simple question: is our AI setup delivering the outcomes we expect?

That question comes up frequently in conversations with customers and partners, and it was the starting point for a recent guide we published on evaluating AI for multilingual content.

The challenge is rarely the technology itself. More often, evaluations generate data but not clarity. Teams run pilots, collect scores, compare outputs, and still walk away uncertain about what the results actually mean. Metrics fluctuate. Trade-offs remain implicit. Stakeholders interpret the same results differently. The outcome is not failure, but confusion.

In multilingual environments, where performance varies significantly by language, domain, and content type, that uncertainty compounds quickly.

Evaluating AI is not about asking whether it works. It is about asking whether it works well enough, often enough, for your business, your brand, and your customers. Is it genuinely breaking down language barriers? Does it create content that resonates? Or does it introduce subtle friction, misalignment, or risk that only becomes visible after deployment?

Why most AI evaluations fall short

Many AI evaluations generate metrics but not decisions. In many localization teams, quality has traditionally been the primary focus. But AI evaluation cannot stop there.

Turnaround times may look promising. Costs may appear lower. Quality scores may fluctuate. Meanwhile, senior stakeholders may be asking a different question entirely: is this fast enough and cheap enough to justify scaling?

Without clearly defined expectations across time, cost, and quality, evaluation outputs become noisy. One stakeholder prioritizes speed. Another prioritizes cost reduction. Another focuses on linguistic nuance or brand tone. The evaluation produces data, but no shared conclusion.

No evaluation methodology can provide every answer. Frameworks such as Multidimensional Quality Metrics (MQM) bring structure and rigor, but they require expertise to interpret correctly and are not equally useful across all use cases. Automated metrics promise scale, yet often generate signals that are difficult to translate into confident decisions.

At a time when Machine Translation approaches or exceeds human quality in many scenarios, and AI is applied far beyond translation alone, evaluation complexity increases rather than decreases. Without clear expectations, teams risk drowning in data while losing trust in the outputs.

One way to reduce the noise is to step back and untangle expectations. Start with clear goals. Our guide on evaluating AI for multilingual content encourages exactly this kind of back-to-basics approach.

The three dimensions that define AI value

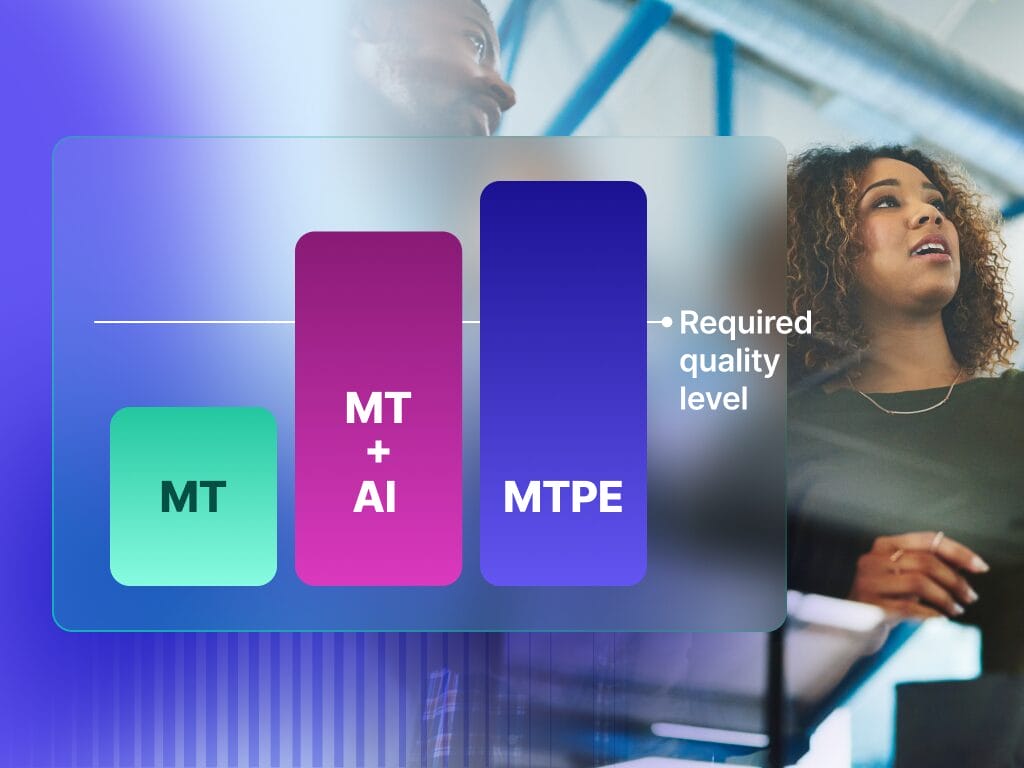

Across industries and use cases, effective AI evaluations consistently return to three interdependent dimensions: time, cost, and quality. What matters is not optimizing any single metric, but understanding how they interact.

Time is about more than raw speed. It includes turnaround expectations, latency in near-real-time scenarios, and the ability to scale output without bottlenecks. Faster delivery can unlock new markets and content strategies, but only if it does not introduce unacceptable risk elsewhere.

Cost is often the initial driver for AI adoption, but it is also the easiest metric to misinterpret. Cost per word and total spend matter, but so do cost predictability and efficiency relative to human effort. Savings that require constant rework or downstream correction rarely hold up under scrutiny.
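To see why rework erodes savings, it can help to put numbers on it. The sketch below is a simplified illustration, not a pricing model: the function name, the per-word rates, and the 30% rework share are all made-up assumptions, and a real calculation would also account for review tooling, project management, and turnaround effects.

```python
def effective_cost_per_word(ai_cost, rework_share, rework_cost):
    """Raw AI cost plus the expected cost of correcting the share of words that need rework."""
    return ai_cost + rework_share * rework_cost


# Illustrative figures only (hypothetical rates, not benchmarks).
human_baseline = 0.12
ai_only = effective_cost_per_word(ai_cost=0.02, rework_share=0.0, rework_cost=0.08)
with_rework = effective_cost_per_word(ai_cost=0.02, rework_share=0.30, rework_cost=0.08)

print(f"AI, no rework:  {ai_only:.3f} per word")
print(f"AI, 30% rework: {with_rework:.3f} per word")
print(f"Savings vs. human baseline: {1 - with_rework / human_baseline:.0%}")
```

With these assumed figures, a headline saving of roughly 83% shrinks to roughly 63% once rework is counted. The numbers themselves matter less than the habit of measuring cost after correction rather than before it.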

Quality is the most complex dimension and the most misunderstood. It goes beyond fluency and grammatical correctness to include stylistic alignment, functional accuracy, terminology adherence, formatting integrity, and risk severity. Quality expectations vary widely depending on content type. Getting the message across may be sufficient in some cases. In others, a single error can have legal, regulatory, or reputational consequences.

Optimizing one dimension inevitably impacts the others. AI evaluation is therefore about making trade-offs explicit rather than discovering them too late in production.
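One lightweight way to make those trade-offs explicit is to write the expectations down before a pilot starts and check results against them. The following is a minimal sketch under stated assumptions: the class names, field names, thresholds, and the 0 to 100 quality scale are hypothetical and would need to be agreed with stakeholders for each content type.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Expectations:
    max_turnaround_hours: float
    max_cost_per_word: float
    min_quality_score: float  # assumed 0-100 scale


@dataclass(frozen=True)
class PilotResult:
    turnaround_hours: float
    cost_per_word: float
    quality_score: float


def unmet_expectations(result, expected):
    """Return the dimensions where the pilot misses the agreed target."""
    misses = []
    if result.turnaround_hours > expected.max_turnaround_hours:
        misses.append("time")
    if result.cost_per_word > expected.max_cost_per_word:
        misses.append("cost")
    if result.quality_score < expected.min_quality_score:
        misses.append("quality")
    return misses


# Hypothetical targets and pilot figures, for illustration only.
expected = Expectations(max_turnaround_hours=24, max_cost_per_word=0.05, min_quality_score=85)
pilot = PilotResult(turnaround_hours=6, cost_per_word=0.03, quality_score=81)
print(unmet_expectations(pilot, expected))
```

In this example the pilot is fast and cheap but misses the quality target, so the conversation shifts from "did it work?" to "is a faster, cheaper result worth a four-point quality gap for this content?"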

Why multilingual content raises the stakes

AI performance is inherently uneven. Results vary across language pairs, domains, and content types. Strong performance in English marketing copy does not guarantee acceptable output in regulated documentation or less-resourced languages.

Evaluating AI on narrow or idealized samples creates false confidence. The only meaningful signal comes from representative production content that reflects the languages, domains, and risk profiles the system will actually touch.
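As a rough illustration of what "representative" can mean in practice, the sketch below draws an evaluation sample from production segments so that every language and domain combination is covered, instead of letting the largest content type dominate. The segment fields (`language`, `domain`) and the per-group sample size are assumptions made for this example.

```python
import random
from collections import Counter, defaultdict


def stratified_sample(segments, per_stratum, seed=0):
    """Sample up to `per_stratum` segments from each (language, domain) group."""
    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    strata = defaultdict(list)
    for segment in segments:
        strata[(segment["language"], segment["domain"])].append(segment)

    sample = []
    for key in sorted(strata):
        group = strata[key]
        # Small groups are taken in full rather than skipped.
        sample.extend(rng.sample(group, min(per_stratum, len(group))))
    return sample


# Hypothetical production content: mostly German marketing, very little legal.
mix = [("de", "marketing")] * 50 + [("de", "legal")] * 5 + [("ja", "legal")] * 2
segments = [{"id": i, "language": lang, "domain": dom} for i, (lang, dom) in enumerate(mix)]

sample = stratified_sample(segments, per_stratum=3)
counts = Counter((s["language"], s["domain"]) for s in sample)
print(counts)
```

A purely random sample of the same size would likely consist almost entirely of German marketing copy. Stratifying keeps the small but high-risk groups, such as Japanese legal content, visible in the evaluation.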

Not all content carries the same tolerance for error. Low-impact material can absorb minor imperfections. High-visibility or regulated content cannot. Treating all content equally in evaluation ignores these realities and undermines trust in automation.

From scoring systems to confident decisions

The goal of AI evaluation is not to crown a single winner or generate impressive averages. It is to understand where automation is safe, where it needs guardrails, and where human expertise remains essential.

This is where quality visibility becomes critical. Segment-level and job-level quality signals make it possible to move beyond blanket automation decisions and toward more nuanced workflows. Instead of asking whether AI is good or bad, teams can ask where it performs strongly, where it struggles, and how those differences should shape routing, review, and investment decisions.
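A simple sketch of how such signals might shape routing is shown below. It assumes each segment arrives with a quality score on a 0 to 100 scale and a risk tier assigned by content type. The thresholds, tier names, and route labels are hypothetical and do not describe any specific product's behavior.

```python
# Hypothetical minimum scores (0-100) for publishing without human review.
AUTO_PUBLISH_THRESHOLD = {"low": 70, "medium": 85}


def route_segment(quality_score, risk_tier):
    """Decide whether a segment can skip review, based on its score and risk tier."""
    if risk_tier == "high":
        # Regulated or high-visibility content is always reviewed in this sketch.
        return "human_review"
    if risk_tier not in AUTO_PUBLISH_THRESHOLD:
        raise ValueError(f"unknown risk tier: {risk_tier!r}")
    if quality_score >= AUTO_PUBLISH_THRESHOLD[risk_tier]:
        return "auto_publish"
    return "human_review"


for score, tier in [(78, "low"), (78, "medium"), (95, "high")]:
    print(score, tier, "->", route_segment(score, tier))
```

The same score of 78 leads to different outcomes depending on the content's risk tier, which is the point: the routing rule encodes tolerance for error explicitly instead of applying one blanket decision to all content.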

This type of visibility also enables smarter collaboration between humans and AI, focusing human effort where it adds the most value rather than applying it uniformly across all content.

A more practical approach to AI evaluation

Our guide on evaluating AI for multilingual content explores this challenge in depth. It outlines a structured methodology for defining success criteria, building representative evaluation datasets, applying meaningful metrics, and interpreting results through the lens of real business risk.

The framework draws on the same quality measurement principles that underpin Phrase’s broader approach to automation and quality management, including work on segment-level quality scoring and targeted human intervention.

For teams looking to move beyond intuition and experimentation, the guide offers a practical foundation for making AI decisions that scale responsibly, align with stakeholder expectations, and deliver real value to customers.