In a cloud-powered, borderless landscape, localization has become an infrastructure-layer capability, essential for compliance clarity, content risk mitigation, and reliable global delivery.

Over the past decade, cloud platforms became the backbone of digital transformation. But the 2020s have pushed infrastructure further: hybrid and multi-cloud models are now the default.

Gartner forecasts that 90% of enterprises will adopt hybrid cloud by 2027, while Forrester reports edge-cloud adoption is growing by more than 30% year over year. At the same time, 5G and on-device AI inference are enabling low-latency, personalized digital experiences while also fragmenting compliance and content delivery.

Hybrid approaches bring real advantages, including vendor flexibility, better latency, support for data residency and compliance, and stronger resilience. They allow companies to place workloads and data closer to users, reduce network congestion, and respond faster when connectivity is unreliable or highly regulated.

But they also create complexity. Organizations now juggle public clouds, on-premises infrastructure, and edge nodes, each with its own rules, risks, and regulatory implications.

In this environment, localization is no longer a surface-level UI task. It has become an infrastructure-layer requirement. It’s the connective tissue that ensures compliant, regionally accurate, and trustworthy information flows through increasingly distributed systems. Without it, global scale brings exposure rather than opportunity.

The rise of hybrid cloud, edge AI and 5G

Edge computing brings processing closer to where data is generated, cutting latency and enabling real-time action, from surgical guidance to autonomous vehicles and industrial automation. Keeping sensitive data local also helps reduce compliance and privacy risk.

Content delivery networks pioneered the model of placing data near users; today, edge AI and IoT push it further, running inference on devices and nodes connected by 5G for ultra-low-latency, high-priority data flows.

As mentioned earlier, this shift is accelerating fast. MarketsandMarkets projects the edge AI market to expand from $2.4 billion in 2025 to over $8.8 billion by 2028. These trends reflect how companies are building distributed intelligence: combining public cloud, on-premises infrastructure, and localized edge nodes to power always-on, personalized services.

But distributing intelligence also distributes regulatory exposure. Each edge node may handle user data, system prompts, and compliance-critical messages differently. AI models running on devices can generate or deliver content that must meet local language, tone, and legal standards.

Without infrastructure-layer localization ensuring regionally accurate, legally clear content at the point of delivery, companies risk fragmented user experiences, regulatory fines, and delays to market launches.

Several SaaS providers have already experienced rollout delays when consent flows, security notices, or onboarding content were not localized for new markets. As AI-driven systems expand across borders and data residency rules tighten, ensuring compliance clarity and content risk mitigation at the infrastructure level is becoming a baseline requirement for global scale.

The pressure point: why infrastructure needs localization

Hybrid cloud and edge strategies promise flexibility and speed, but they also create fragmentation. Organizations juggle multiple public clouds, on-premises systems, and distributed teams, making it harder to unify policies, security models, and user communications.

Managing this edge-to-cloud continuum is now one of the top GenAI challenges, with Gartner naming data synchronization across hybrid environments as a near-term barrier to scale.

Compliance and security are the sharpest pressure points. As data flows across jurisdictions, enterprises must meet local residency rules and privacy regulations while protecting against leaks and misconfigurations. Cloudflare notes that visibility of risk drops sharply when workloads are split across providers; the shared responsibility model can leave gaps if policies and alerts aren’t adapted for each region.

This is where infrastructure-layer localization becomes critical. It ensures that onboarding flows, consent language, system prompts, and security notifications are legally sound and regionally accurate before deployment. Without this, regulatory exposure and user confusion escalate quickly.

Consider one recent SaaS expansion into India: launch was paused for six weeks after regulators flagged English-only consent copy under the DPDP Act and questioned unlocalized breach alerts.

By integrating automated, localized compliance content into its CI/CD pipeline, the company relaunched within two weeks and cut legal review cycles by 40 percent.

At global scale, treating localization as a surface-level UI task creates content risk. Building compliance clarity and content risk mitigation into infrastructure is now essential for faster, safer market entry.

Quantifying the compliance challenge

The scope of regulatory complexity keeps expanding. Enterprise Strategy Group reports that 89 percent of organizations say cloud-native applications require distinct security and compliance policies compared with traditional systems. Since the GDPR triggered a wave of global privacy reforms, more than 30 countries have introduced or updated data protection laws, including India’s Digital Personal Data Protection Act, while state- and sector-specific rules such as the CCPA and HIPAA add further obligations in the United States.

For global tech providers, this creates a moving target. Legal frameworks differ by region, and failure to adapt core information (onboarding and support flows, consent and terms of service, security alerts and incident notices, developer and user documentation) can lead to fines, rollout delays, and lost trust. Infrastructure-layer localization provides the compliance clarity needed to keep these critical assets accurate, auditable, and synchronized with deployment.

To scale safely and quickly, technology leaders need systematic ways to embed this localization into development and deployment pipelines.

Strategies for achieving scalable, localized cloud experiences

Edge-aware AI and content delivery

Edge AI enables real-time decisions and hyper-personalized experiences, but its impact depends on localized content and context. In healthcare, wearable devices and diagnostic tools run on-device algorithms to deliver instant insights while protecting sensitive data. In retail, augmented reality try-ons and AI-driven recommendations adapt to each user on the fly while keeping private or regulated information on device.

These interactions must use pre-localized prompts, system copy, and feedback to avoid confusion or non-compliance. Without this, devices can deliver misleading instructions or legally ambiguous consent messaging, exposing companies to both regulatory and reputational risk.
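Pre-localized prompts on an edge device can be as simple as an approved string bundle shipped with the app or firmware and resolved by locale at runtime. The minimal Python sketch below assumes a hypothetical bundle and a hypothetical policy: compliance-critical keys (here, anything prefixed `consent.` or `safety.`) may fall back only to a more general variant of the same language, such as `de-AT` to `de`, and otherwise raise an error, while ordinary UI copy may also fall back to English.

```python
# Hypothetical bundle of pre-localized, pre-approved strings shipped to a device.
BUNDLE = {
    "en": {"consent.share_data": "Allow sharing of your health data?",
           "ui.greeting": "Welcome"},
    "de": {"consent.share_data": "Dürfen wir Ihre Gesundheitsdaten weitergeben?"},
    "pt": {"ui.greeting": "Bem-vindo"},
}

# Hypothetical naming convention: keys under these prefixes are compliance-critical.
CRITICAL_PREFIXES = ("consent.", "safety.")
DEFAULT_LOCALE = "en"


class MissingLocalizationError(LookupError):
    """Raised when a compliance-critical string has no approved translation."""


def candidate_locales(locale: str) -> list[str]:
    """'de-AT' -> ['de-AT', 'de'], most specific first."""
    parts = locale.split("-")
    return ["-".join(parts[:i]) for i in range(len(parts), 0, -1)]


def resolve(key: str, locale: str) -> str:
    chain = candidate_locales(locale)
    critical = key.startswith(CRITICAL_PREFIXES)
    if not critical and DEFAULT_LOCALE not in chain:
        # Ordinary UI copy may fall back to the default language.
        chain.append(DEFAULT_LOCALE)
    for loc in chain:
        text = BUNDLE.get(loc, {}).get(key)
        if text is not None:
            return text
    if critical:
        # Block the flow rather than show consent text in another language.
        raise MissingLocalizationError(f"{key!r} not localized for {locale!r}")
    raise KeyError(key)
```

Refusing to show an unlocalized consent prompt is a deliberate choice in this sketch: a blocked flow is visible and fixable, whereas a consent screen in the wrong language may not satisfy local consent requirements.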

Executive takeaway: Personalization without infrastructure-layer localization increases content risk, slows approvals, and erodes user trust.

Localized CI/CD pipelines

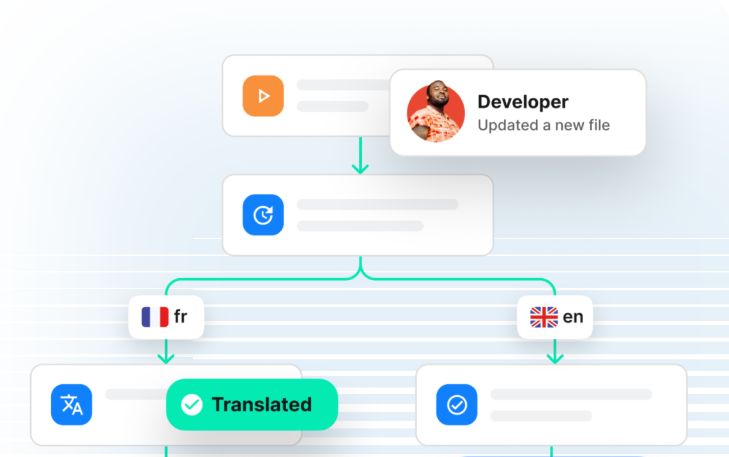

Modern DevOps pipelines ship code to production multiple times a day. For global products, content must follow the same path or risk becoming a release blocker. Without integrated localization, translation and review can slow builds and delay compliance approvals.
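One minimal way to let content travel the same path as code is a completeness check that runs as a pipeline step and fails the build when a shipped locale lacks required compliance strings. This Python sketch assumes a hypothetical layout (one flat JSON file of key-to-string pairs per locale, such as `locales/hi-IN.json`) and a hypothetical list of required keys; both would need adapting to a real repository.

```python
import json
import sys
from pathlib import Path

# Hypothetical: keys every shipped locale must contain before release.
REQUIRED_KEYS = ["consent.terms", "consent.privacy", "security.breach_notice"]


def load_locales(directory: Path) -> dict[str, dict[str, str]]:
    """Load flat key/value files such as locales/hi-IN.json, keyed by locale."""
    return {p.stem: json.loads(p.read_text(encoding="utf-8"))
            for p in sorted(directory.glob("*.json"))}


def missing_keys(locales: dict[str, dict[str, str]],
                 required: list[str]) -> dict[str, list[str]]:
    """Map each locale to the required keys that are absent or blank."""
    report = {}
    for locale, strings in locales.items():
        gaps = [k for k in required if not strings.get(k, "").strip()]
        if gaps:
            report[locale] = gaps
    return report


def main(directory: str = "locales") -> int:
    """Return a process exit code: 0 if complete, 1 if anything is missing."""
    locales = load_locales(Path(directory))
    if not locales:
        print(f"no locale files found in {directory}", file=sys.stderr)
        return 1
    report = missing_keys(locales, REQUIRED_KEYS)
    for locale, gaps in report.items():
        print(f"{locale}: missing {', '.join(gaps)}", file=sys.stderr)
    return 1 if report else 0
```

A CI job could call this through a one-line entry point such as `sys.exit(main("locales"))`, so a missing consent or breach-notice string blocks the release instead of surfacing after launch.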

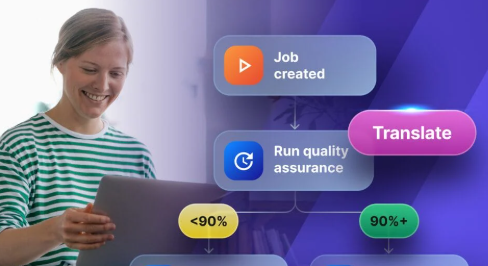

Many teams now adopt “localization as code” or “composable localization”: treating translatable assets like source code with version control, automation, repeatability, and observability. When content is published in a CMS or codebase, webhooks automatically extract localizable fields, send them for translation, and sync updates back into the system through APIs.
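The extract, send, and sync-back loop described above can be sketched in a few plain functions. Everything here is hypothetical: the CMS payload shape, the field names treated as localizable, and the job format. Sending the job to a translation platform and writing results back through the CMS API are deliberately left out, since those calls depend on the specific products involved.

```python
import hashlib
import json

# Hypothetical: which CMS fields contain user-facing copy.
LOCALIZABLE_FIELDS = {"title", "body", "consent_text"}


def extract_localizable(entry: dict) -> dict[str, str]:
    """Pick only non-empty translatable fields out of a CMS webhook payload."""
    return {k: v for k, v in entry["fields"].items()
            if k in LOCALIZABLE_FIELDS and isinstance(v, str) and v.strip()}


def build_job(entry: dict, target_locales: list[str]) -> dict:
    """Build a translation job; the content hash lets a receiver spot unchanged re-sends."""
    source = extract_localizable(entry)
    digest = hashlib.sha256(
        json.dumps(source, sort_keys=True).encode("utf-8")).hexdigest()
    return {"entry_id": entry["id"], "source_hash": digest,
            "targets": target_locales, "segments": source}


def merge_translations(entry: dict, locale: str,
                       translated: dict[str, str]) -> dict:
    """Return a copy with translated fields stored per locale; the source is untouched."""
    updated = {**entry, "locales": {**entry.get("locales", {})}}
    updated["locales"][locale] = {k: v for k, v in translated.items()
                                  if k in LOCALIZABLE_FIELDS}
    return updated
```

Hashing the extracted source gives the receiving side a cheap way to skip unchanged content when the same webhook fires more than once, which helps keep repeated builds repeatable.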

For example, a SaaS team deploying new onboarding flows across regions can use Phrase’s GitHub integration to trigger translation as part of every build, reducing errors and removing manual bottlenecks.

Key takeaway: Treat localization as code to eliminate release friction, maintain auditability, and accelerate safe global launches.

Compliance through cloud-smart localization

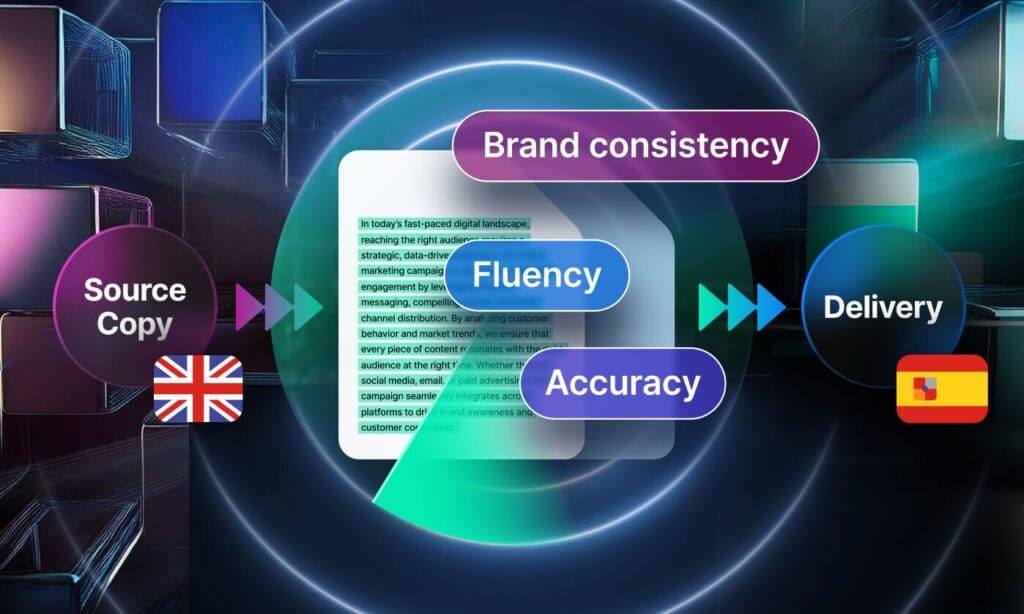

Cloud-smart strategies move away from putting everything in the public cloud and instead place data and workloads where they make the most sense: in the cloud, on premises, or at the edge. But this flexibility creates compliance complexity, because legal requirements, data residency rules, and privacy obligations can differ by market.

Compliance-focused content such as terms of service, privacy notices, security policies, and onboarding guides must be legally accurate, culturally appropriate, and easy for regulators and users to understand.
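Legal accuracy also depends on each translation tracking the current version of its source text. One common pattern, sketched here under assumptions (a hypothetical record that stores a hash of the source each translation was made from, plus a review flag), is to flag any market whose localized terms or privacy notice was produced from an older source or has not yet been legally reviewed.

```python
import hashlib
from dataclasses import dataclass


def fingerprint(text: str) -> str:
    """Stable identifier for one version of a source document."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass
class LocalizedDoc:
    market: str             # e.g. "IN", "DE"
    doc_type: str           # e.g. "privacy_notice", "terms"
    source_hash: str        # fingerprint of the source this translation came from
    legally_reviewed: bool  # hypothetical sign-off flag


def stale_or_unreviewed(source_texts: dict[str, str],
                        docs: list[LocalizedDoc]) -> list[tuple[str, str, str]]:
    """Return (market, doc_type, reason) for every doc that should block release."""
    problems = []
    for doc in docs:
        current = fingerprint(source_texts[doc.doc_type])
        if doc.source_hash != current:
            problems.append((doc.market, doc.doc_type, "source changed since translation"))
        elif not doc.legally_reviewed:
            problems.append((doc.market, doc.doc_type, "awaiting legal review"))
    return problems
```

Running a check like this at deploy time ties legal sign-off to the exact source version that ships, which keeps compliance content auditable as it changes.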

Key takeaway: Cloud-smart infrastructure requires compliance clarity at every regional touchpoint. Localizing legal and security content early reduces regulatory exposure, builds trust, and accelerates market entry.

No-code orchestration for global rollouts

No-code orchestration platforms let content and legal teams automate updates without waiting on developer cycles. In localization, this control is critical for keeping global launches compliant and on schedule.

Tools such as Phrase Orchestrator allow teams to create automation workflows that update product copy, support documentation, and legal terms when requirements change. Approvals and alerts are built in, so compliance-critical content stays synchronized with deployments.
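The following is not Phrase Orchestrator’s API or configuration format. It is a generic Python sketch of the approval logic such a workflow typically encodes: content moves through fixed states, nothing reaches “published” without passing an approval step, a requirement change reopens affected content, and every transition emits an alert. The state names and the `notify` callback are hypothetical.

```python
from enum import Enum
from typing import Callable


class State(Enum):
    DRAFT = "draft"
    IN_TRANSLATION = "in_translation"
    AWAITING_APPROVAL = "awaiting_approval"
    PUBLISHED = "published"


# Allowed transitions; anything else is rejected, so approval cannot be skipped.
TRANSITIONS = {
    State.DRAFT: {State.IN_TRANSLATION},
    State.IN_TRANSLATION: {State.AWAITING_APPROVAL},
    State.AWAITING_APPROVAL: {State.PUBLISHED, State.IN_TRANSLATION},
    State.PUBLISHED: {State.DRAFT},  # a requirement change reopens content
}


class ContentItem:
    def __init__(self, name: str, notify: Callable[[str], None]):
        self.name = name
        self.state = State.DRAFT
        self.notify = notify  # hypothetical alert hook (email, chat, ticket)

    def move(self, target: State) -> None:
        if target not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.name}: {self.state.value} -> {target.value} not allowed")
        self.notify(f"{self.name}: {self.state.value} -> {target.value}")
        self.state = target

    def requirement_changed(self) -> None:
        """Send content back through translation (and therefore approval) again."""
        if self.state is State.PUBLISHED:
            self.move(State.DRAFT)
        if self.state in (State.DRAFT, State.AWAITING_APPROVAL):
            self.move(State.IN_TRANSLATION)
```

Encoding the allowed transitions as data keeps the governance rule (no publication without approval) in one reviewable place rather than scattered across scripts.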

Executive takeaway: No-code orchestration shortens compliance lag, strengthens governance, and speeds safe global rollouts.

Localization as a risk-proofing layer

Incidents and regulatory checks demand clear, immediate communication, but global teams often scramble when critical content isn’t pre-localized. Breach notifications, security alerts, and compliance updates can stall if they require ad hoc translation after an event.

By embedding localization into CI/CD workflows and content governance, companies ensure that high-risk messages and documentation are ready before they’re needed. For example, a cloud platform facing a regional data breach distributed pre-localized security alerts and regulatory disclosures within hours, protecting user trust and avoiding penalties.
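“Ready before they’re needed” can be checked mechanically: keep approved incident templates per locale with placeholders for facts only known at incident time, verify in advance that every market has one, and fill in details by substitution rather than fresh translation. The template wording, locales, market codes, and placeholder names below are hypothetical; `string.Template` is from the Python standard library.

```python
from string import Template

# Hypothetical pre-approved breach-notice templates, one per supported locale.
BREACH_TEMPLATES = {
    "en": Template("On $date we detected unauthorized access affecting $count accounts. "
                   "Contact $contact for details."),
    "de": Template("Am $date haben wir einen unbefugten Zugriff festgestellt, "
                   "der $count Konten betrifft. Weitere Informationen: $contact."),
}

PLACEHOLDERS = {"date", "count", "contact"}


def readiness_gaps(markets: dict[str, str]) -> list[str]:
    """Run ahead of time: markets whose locale has no pre-approved template."""
    return sorted(m for m, loc in markets.items() if loc not in BREACH_TEMPLATES)


def render_notice(locale: str, **facts: str) -> str:
    """Fill incident-time facts into the approved template; fail loudly on gaps."""
    missing = PLACEHOLDERS - set(facts)
    if missing:
        raise ValueError(f"missing facts: {sorted(missing)}")
    # substitute() (unlike safe_substitute()) raises on any unfilled placeholder.
    return BREACH_TEMPLATES[locale].substitute(facts)
```

Running `readiness_gaps` in the same pipeline as other checks surfaces a market such as India with no approved Hindi notice long before an incident, rather than during one.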

Key takeaway: Infrastructure-layer localization is a core risk mitigation tool. It protects trust, speeds incident response, and keeps global operations resilient under pressure.

Onboard tools: Phrase product integration

Localization is often seen as translation alone, but Phrase goes deeper, embedding compliance clarity, content risk mitigation, and automation into the infrastructure stack.

- A single source of truth for digital product and system copy that integrates directly with design and engineering workflows, so localized UI and microinteractions ship with code.

- A no-code automation layer that connects with developer tools, CMS, and CRM systems to trigger, approve, and audit localization updates alongside deployments.

- Phrase NextGen MT: AI refinement that ensures natural flow, accurate terminology, and cohesive style, reducing post-edit effort and strengthening compliance review.

- Extensible integrations with CI/CD pipelines and cloud-native platforms that keep localization in sync with product delivery.

Cloud-level impact: key takeaways for tech leaders

- Scale without localization is exposure. Infrastructure-layer localization delivers compliance clarity, mitigates content risk, and protects user trust as you expand globally.

- Real-time, regionally relevant delivery is now baseline. Localized prompts, policies, and system copy must ship with every build to avoid delays and regulatory friction.

- Phrase operationalizes this shift. Integrated pipelines, orchestration, and AI refinement embed localization into how you operate as well as how you communicate.

Hybrid cloud approaches have emerged as a sophisticated way to scale businesses globally, combining the flexibility of the cloud with the privacy and low latency of edge computing where they are needed.

Whichever route enterprises take with hybrid cloud, scale increases exposure. Localization is a major mitigator of this risk, helping companies comply with local laws and regulations and keep end users informed about access and security.

Choosing the right localization technology to integrate with cloud architecture will play a vital role in delivering compliant, culturally relevant and contextual content for any tech or SaaS business.