DNA offers both a blueprint and a mechanism for growth and change in living organisms.

In a way the Phrase API specification has become the DNA of our API clients. It contains the definitions of all objects and interfaces.

From this specification we generated our clients for various platforms using code generation. Read on to learn how we did it.

If you are trying to build your API before designing it, you are likely to create a substantial amount of work during the development cycle due to poorly integrated designs, drift and errors. You will define the API in your code, instead of coding to its definition.

In the rush of building APIs, we also tend to forget about customers’ needs. A specification-driven development approach instead allowed us to include actual users in the design cycle and ensured that the API remains uniform across the full interface. It helps keep resources and methods standardized, saving time, energy and money in the long term.

While the mechanisms of specification-driven development aren’t new (there are even tools out there to handle most of this), we learned a lot while building the second version of Phrase’s API based on an initial API specification.

We wrote the API documentation first. Because we used a static site generator, the pages already included descriptions and titles in YAML format.

We simply added our specification of the actions for each resource, including inputs and outputs. These files for our documentation were then reused in OpenAPI to generate libraries and API clients for the Phrase API in various programming languages.

The following sections give more insight into the different aspects of creating a RESTful API using a specification-driven development approach.

Specification-driven documentation

Let’s first briefly dive into the specifics of the term “RESTful API”, to set a common ground for understanding what documentation must deliver.

The Phrase API allows the client to request that actions like list, show, add, delete or update be performed on resources by the server. In the Phrase context, for example, this could be the request “update project X’s name to Y”. The documentation must then specify which resources exist and which actions are allowed on them.

Depending on the action, the client must send data with the request or the server delivers content as part of a response. It must therefore be specified which data is accepted by the resources’ actions and what the responses look like.

In Phrase, all messages are encoded in JSON. The “update project” example given above would accept the input

    {
      "name": "Y"
    }

and yield the response

    {
      "id": "X",
      "name": "Y",
      "created_at": "2015-01-28T09:52:53Z",
      "updated_at": "2015-01-28T09:52:53Z",
      "shares_translation_memory": true
    }

as specified in the documentation.

There is also a high-level protocol that describes API-specific aspects of the communication between client and server, such as authentication, pagination or caching. These apply to all messages sent and need to be described only once, for example on the index page of the specific API version.

Using a static site generator is a convenient way to create this kind of documentation. We decided to use nanoc for Phrase (and other projects), as it offers a lot of flexibility and is used for large projects, for example the GitHub developer documentation.

In nanoc every page has a YAML header that contains information on the page’s content and layout. Additionally the header can be used to specify data that is used by the layout to generate dynamic content.

The following example is a glimpse into the “projects” page of the Phrase documentation. It shows the page parameters, like title, headline and layout, and the data used for the layout, like resource_name and the actions map.

    title: Projects
    headline: Projects
    layout: api_resource_v2
    resource_name: project
    actions:
      update:
        title: Update a project
        verb: PATCH
        url_path: /v2/projects/:id
        description: Update an existing project.
        params:
          -
            name: "name"
            description: 'Name of the project'
            type: string
            optional: false
            example: "new project name"
        response:
          status: 200
          resource: project
    ---
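Because the header is plain data, it can drive more than the layout. The following is a minimal Ruby sketch, assuming a trimmed copy of the header above, that checks a request body against the params list of an action. The helper name validate_input and the mapping from spec types to Ruby classes are illustrative assumptions, not part of nanoc or the Phrase tooling.

```ruby
require 'yaml'

# Trimmed copy of the page header shown above.
header = YAML.safe_load(<<~SPEC)
  resource_name: project
  actions:
    update:
      verb: PATCH
      url_path: /v2/projects/:id
      params:
        -
          name: "name"
          type: string
          optional: false
SPEC

# Hypothetical helper: returns a list of problems found in the input.
# An empty list means the input satisfies the params specification.
def validate_input(action, input)
  # Assumed mapping from spec type names to Ruby classes.
  type_classes = { 'string' => String }
  action.fetch('params').flat_map do |param|
    name = param.fetch('name')
    if !input.key?(name)
      param['optional'] ? [] : ["missing required parameter: #{name}"]
    elsif !input[name].is_a?(type_classes.fetch(param.fetch('type')))
      ["wrong type for #{name}: expected #{param['type']}"]
    else
      []
    end
  end
end

update = header.fetch('actions').fetch('update')
puts validate_input(update, { 'name' => 'Y' }).inspect
puts validate_input(update, {}).inspect
```

The same params list that feeds the documentation layout is the only input here, so documentation and validation cannot drift apart.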

The response part of the update action references a type defined in a central location, a separate YAML file parsed initially when generating the static pages.

    project:
      spec:
        id: string
        name: string
        created_at: datetime
        updated_at: datetime
        shares_translation_memory: boolean
      example:
        id: "23"
        name: "project name"
        created_at: "2015-01-28T09:52:53Z"
        updated_at: "2015-01-28T09:52:53Z"
        shares_translation_memory: true
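To illustrate how a response reference could be resolved against the central type file, here is a small Ruby sketch. It looks up the type named by the response and checks that the example values conform to the declared field types. The helper names matches_type? and mismatches, and the way each type name is interpreted, are assumptions made for this illustration.

```ruby
require 'yaml'
require 'time'

# Copy of the central type definition shown above.
types = YAML.safe_load(<<~TYPES)
  project:
    spec:
      id: string
      name: string
      created_at: datetime
      updated_at: datetime
      shares_translation_memory: boolean
    example:
      id: "23"
      name: "project name"
      created_at: "2015-01-28T09:52:53Z"
      updated_at: "2015-01-28T09:52:53Z"
      shares_translation_memory: true
TYPES

# True if the string parses as an ISO 8601 timestamp.
def iso8601?(value)
  Time.iso8601(value)
  true
rescue ArgumentError
  false
end

# Assumed interpretation of the three type names used in the spec.
def matches_type?(value, type)
  case type
  when 'string'   then value.is_a?(String)
  when 'boolean'  then value == true || value == false
  when 'datetime' then value.is_a?(String) && iso8601?(value)
  else false
  end
end

# Names of all fields whose example value does not match the spec.
def mismatches(definition)
  example = definition.fetch('example')
  definition.fetch('spec').reject { |field, type| matches_type?(example[field], type) }.keys
end

# The response part of the update action references the type by name.
response = { 'status' => 200, 'resource' => 'project' }
definition = types.fetch(response.fetch('resource'))
puts mismatches(definition).inspect
```

A check like this can run whenever the pages are generated, so a type and its example cannot silently disagree.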

At this point it is quite clear that we didn’t write the documentation itself in the beginning, but used the static site generator to generate documentation from a specification given in the page headers. Admittedly, this is a very pedantic view of what happened, but it makes one thing obvious: if documentation can be generated, code for libraries and tools using the API can be generated, too.

Generating code

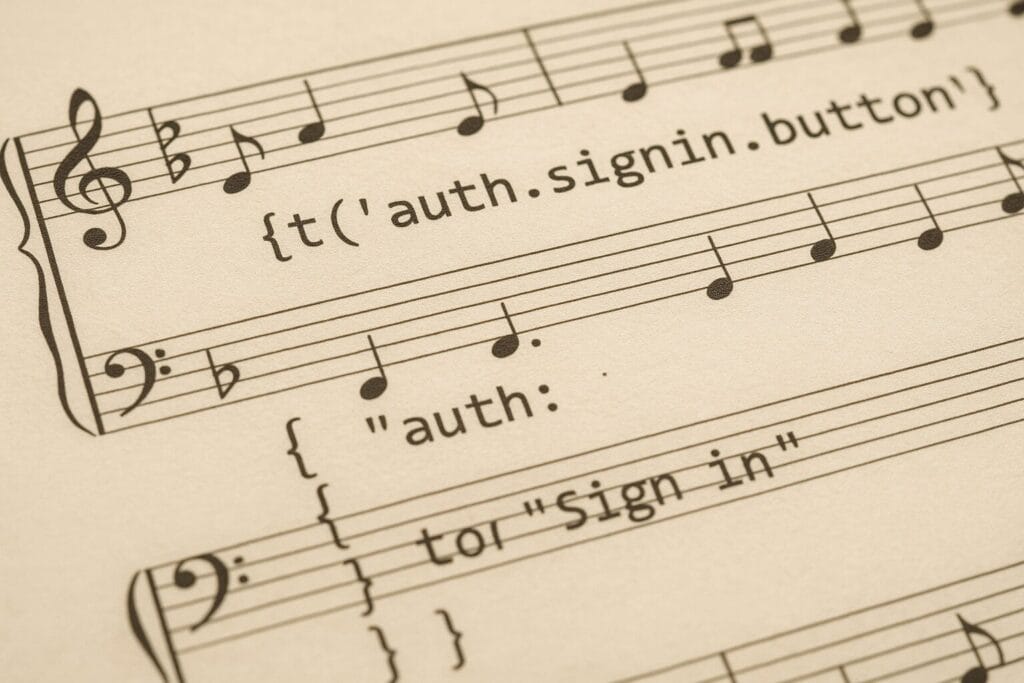

The goal is to have client libraries for different programming languages that provide an idiomatic interface to the services provided by an API.

Every library does a very general thing for each action on each resource: send out requests to the API and properly handle the responses. Therefore it should be easy to generate code.

For the library to be idiomatic in the target language, the specification must be adapted to that language’s best practices and standards. For example, the casing of type names is handled differently across languages: lower vs. upper case, CamelCase vs. snake_case, etc.
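As a small illustration of such an adaptation, the following Ruby sketch converts the snake_case field names used in the specification into two other casing styles. The helper names are hypothetical, and real generators usually need extra rules per language, for example for acronyms.

```ruby
# snake_case -> CamelCase, e.g. for exported type or field names.
def camel_case(name)
  name.split('_').map(&:capitalize).join
end

# snake_case -> lowerCamelCase, e.g. for method or property names.
def lower_camel_case(name)
  head, *tail = name.split('_')
  head + tail.map(&:capitalize).join
end

puts camel_case('shares_translation_memory')
puts lower_camel_case('shares_translation_memory')
```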

A code generator could be designed as follows: in a first phase, the specification used to generate the documentation is parsed into internal structures. Those are handed to a set of generators that do the actual code generation for the different languages.

Code generation is best done using a templating mechanism like Ruby’s erb or ego for Go. In the long run, this is easier to handle than a printf-based solution, as the generated code takes precedence over the generating code and not the other way round: a template reads mostly like the code it produces.
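The two phases can be sketched with Ruby’s standard erb and yaml libraries. This is only an illustration under stated assumptions: the specification is a trimmed copy of the page header, the generated method shape is invented for the example, and request stands for a hypothetical helper that the static glue code of the target library would provide.

```ruby
require 'erb'
require 'yaml'

# Phase 1: parse the specification into plain Ruby structures.
spec = YAML.safe_load(<<~SPEC)
  resource_name: project
  actions:
    update:
      verb: PATCH
      url_path: /v2/projects/:id
      description: Update an existing project.
SPEC

# Phase 2: one generator per target language, here a Ruby client method.
# The heredoc is single-quoted so that "#{id}" reaches the output untouched.
template = ERB.new(<<~'TEMPLATE', trim_mode: '-')
  <%- actions.sort_by { |name, _| name }.each do |name, action| -%>
  # <%= action['description'] %>
  def <%= name %>_<%= resource %>(id, params = {})
    request(:<%= action['verb'].downcase %>, "<%= action['url_path'].sub(':id', '#{id}') %>", params)
  end
  <%- end -%>
TEMPLATE

code = template.result_with_hash(
  resource: spec.fetch('resource_name'),
  actions: spec.fetch('actions')
)
puts code
```

The template body looks almost exactly like the Ruby method it emits, which is the readability advantage over assembling the same text with printf calls.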

The rendered templates are then saved into a target directory that can contain the basic glue code or other required static code. For a Ruby gem, this could be the gem specification and all code for handling requests, authentication and pagination.

Handling of generated code

The resulting code is best checked into an SCM like Git or Mercurial, so that changes are easily reviewable by creating a diff against the previous version. This requires the generated code to be sorted in some way, e.g. alphabetically, so that the order of fragments is deterministic and the diff algorithm can work properly.
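A minimal Ruby sketch of that ordering requirement: two in-memory versions of the same actions map differ only in key order, and sorting by action name before rendering makes the output identical. The render helper and the verbs for list and delete are assumptions for the example.

```ruby
# The same actions, parsed in two different key orders.
first  = { 'update' => 'PATCH', 'list' => 'GET', 'delete' => 'DELETE' }
second = { 'delete' => 'DELETE', 'update' => 'PATCH', 'list' => 'GET' }

# Emit one line per action, always sorted by action name.
def render(actions)
  actions.sort_by { |name, _verb| name }
         .map { |name, verb| "#{verb} #{name}" }
         .join("\n")
end

puts render(first)
puts render(first) == render(second)
```

Without the sort_by step, the two renderings would list the same fragments in different orders and produce a noisy diff even though nothing changed.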

The question of how to partition repositories is not that easy to answer. We decided on a single repository per target library or tool. This is a matter of taste, though, and may change over time. The benefit of using different repositories is that users have dedicated locations for documentation, binary releases, feature requests and bug reports (GitHub provides a lot of convenience for this setup).

Two types of modifications should trigger a new deployment of the generated code and tools: changes to the generator code and changes to the documentation. You should always keep in mind that breaking the API is not an option. Any breaking change to the interface requires a new major version; the current version can only receive enhancements, i.e. additions to the existing interface.
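The rule about additions versus breaking changes can be checked mechanically on the specification. The Ruby sketch below compares two versions of a type specification and classifies the difference. The helper name required_bump, the labels :major, :minor and :none, and the account_id field are assumptions for illustration only.

```ruby
# Classifies the change between two versions of a type specification.
# Removed or retyped fields break clients; new fields are additions.
def required_bump(old_spec, new_spec)
  removed = old_spec.keys - new_spec.keys
  retyped = (old_spec.keys & new_spec.keys).reject { |field| old_spec[field] == new_spec[field] }
  added   = new_spec.keys - old_spec.keys

  return :major unless removed.empty? && retyped.empty?
  return :minor unless added.empty?

  :none
end

current = { 'id' => 'string', 'name' => 'string' }

# Hypothetical new field: an addition to the existing interface.
puts required_bump(current, current.merge('account_id' => 'string'))
# Dropping a field would break existing clients.
puts required_bump(current, { 'id' => 'string' })
```

Running such a check before deploying generated code gives an early warning when a change to the specification would not be a pure enhancement.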

Conclusion

By crafting a concise specification, working closely with the development team and potential API users, we have created a blueprint of how our API should work, describing the application’s interactions in a pragmatic way.

Having the specification written first provided a solid foundation on which we could build our API and ensured that it remains uniform across the full interface. It helped keep resources and methods standardized and easy for our developers to implement.

The benefit is a tight loop in which you can be sure that documentation, libraries and tools will work properly with your API, and all of these artefacts benefit from bug fixes and enhancements. Moreover, the specification-driven development approach helped to reduce inconsistencies in the application.

Going further and accepting that the specification was written first, it is a nice side effect that the specification can instantly be rendered to documentation in a more pleasing format, which helps when writing the initial specification.

Tools like RAML take the specification-driven approach one step further and provide a generic RESTful API modelling language. However, having arrived at the solution on our own showed that doing this yourself is worth it for the experience gained.
