With more and more machine translation (MT) engines on the market providing better results than ever before, companies on track for global growth might find it challenging to identify which one best suits their needs.

Is manually selecting an MT engine enough, or is there a more efficient way to choose? And is it possible to combine different evaluation methods? This guide will walk you through different approaches to assessing which MT engine may provide the best results for your MT project.

The challenge of choosing the right MT engine

Machine translation has grown into an efficient and affordable way of translating text from one language to another. MT allows faster translation turnaround times, improves efficiency when dealing with large amounts of text, and ultimately reduces the overall cost of a project.

Nevertheless, with so many machine translation tools on the market to choose from, how do you choose an MT engine that will best suit the needs of your business? Going for the most renowned or most easily available one may not be the best option because they aren’t all equal: Some will work great for some language pairs, but less so for others; some will be very efficient in one domain or content type, and less so in others. This is constantly evolving as engines are continually getting trained and improving.

For localization managers or business executives, having to choose between all the available MT engines can quickly become a challenge if they lack insight into which one has the features that may best suit their project.

Choosing the wrong MT engine could lead to poor results, higher costs, and potentially even embarrassing mistakes—so let’s review best practices for choosing the right MT engine for your needs.

Categorize your translation material

Keeping in mind that different MT engines will offer different levels of performance depending on what needs to be translated, the first step is to carefully consider the content you want to have translated.

Divide your content into categories according to the specifics of each content asset and the level of quality you want to achieve for each:

- Content type: The text genre with its specific vocabulary, tone of voice, etc., such as legal documentation (e.g., warrants, registrations, certification), technical documentation (e.g., user manuals), marketing content (e.g., blog posts, email templates), user interface (UI) elements (e.g., buttons), and so on.

- Language pairs: The combination of languages you need for translating your text—certain MT engines work best with certain language pairs.

- Time and budget: The amount of time and money you can spend will also have an impact on how you define your approach to choosing the best MT engine.

This will give you a clear picture of what you need before you start the selection process. Below are a number of key elements to take into account in the selection process.
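The categorization above can be captured in a small data structure. The following Python sketch is only an illustration: the `Asset` fields, the category labels, and the helper names are assumptions made for this example, not part of any particular tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """One piece of content to translate (all fields are illustrative)."""
    name: str
    content_type: str  # e.g. "legal", "technical", "marketing", "ui"
    source_lang: str
    target_lang: str
    word_count: int


def categorize(assets):
    """Group assets by (content type, language pair)."""
    groups = defaultdict(list)
    for asset in assets:
        pair = f"{asset.source_lang}-{asset.target_lang}"
        groups[(asset.content_type, pair)].append(asset)
    return dict(groups)


def word_totals(groups):
    """Total word count per category, useful for time and budget planning."""
    return {key: sum(a.word_count for a in items) for key, items in groups.items()}
```

Each resulting category, for example legal content from English to German, can then be evaluated separately, since the best engine for one category is not necessarily the best for another.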

Best practices in MT engine assessment

Assessing which MT engine will work best on your project can be a time-consuming task, but it’s essential for obtaining satisfactory results.

To get started, follow these two steps when assessing MT engines:

- Collect feedback from your translation teams working on projects.

- Compare your translators’ assessment with insights and research by industry thought leaders and companies focused on machine translation innovation.

If you work with in-house translators or have a fixed team of external linguists, you can ask them to evaluate the output of several MT engines in a blind test and determine which one provides the best basis for post-editing. After all, it’s the translators who need to work with the machine-translated content, so adopting their preferred provider can save time for everyone.
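A blind test like this can be organized with very little tooling. The sketch below, written in Python, shows one possible way to hide engine names behind neutral labels and count translator preferences. The function names, the engine names used when calling it, and the vote format are all assumptions made for illustration.

```python
import random
from collections import Counter


def build_blind_sheet(outputs, seed=None):
    """Shuffle one segment's engine outputs and hide engine names.

    outputs: dict mapping engine name -> translation of the segment.
    Returns (sheet, key): `sheet` maps a neutral label ("A", "B", ...) to a
    translation and is shown to translators; `key` maps each label back to
    the engine name and stays with the organizer.
    """
    rng = random.Random(seed)
    engines = list(outputs)
    rng.shuffle(engines)
    labels = [chr(ord("A") + i) for i in range(len(engines))]
    sheet = {label: outputs[engine] for label, engine in zip(labels, engines)}
    key = dict(zip(labels, engines))
    return sheet, key


def tally_votes(votes, keys):
    """Count how often each engine was preferred.

    votes: list of (segment_id, preferred_label) pairs from translators.
    keys: dict mapping segment_id -> the `key` returned for that segment.
    """
    return Counter(keys[segment_id][label] for segment_id, label in votes)
```

Shuffling per segment means a translator cannot learn that, say, label "A" always belongs to the same engine, which keeps the comparison blind across the whole test set.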

In parallel, you can do research and look at studies or reports published by recognized industry experts and compare their insights with your translators’ evaluation. For one, Phrase has been focused on MT research for many years now and regularly publishes key findings on MT engine performance in its quarterly Machine Translation Report.

The MT Report analyzes over 50M unique data segments to answer some fundamental questions about machine translation—such as how MT performs for different language combinations and how much MT performance has (or hasn’t) improved over the last quarter.

Using automated scoring methods

The main purpose of automated machine translation scoring methods and tools is to calculate numerical scores that will characterize the “quality” or performance level of a particular machine translation system.

The results of automated machine translation evaluations should match (or correlate with) human intuitive judgments about certain aspects of translation quality, or with certain characteristics of the usage scenario of the translated text.

There are two main methods for automatically evaluating MT output quality:

- Reference proximity, using the BLEU (Bilingual Evaluation Understudy), TER (Translation Edit Rate, sometimes called Translation Error Rate), or HTER (Human-targeted Translation Edit Rate) families of metrics, which score MT output by how close it is to a reference translation.

- Performance-based methods, which have analogs in human evaluation and aim to assess how well someone can accomplish a task using imperfect MT output.

An early example of this concept involved the use of an MT system to translate flying instruction manuals: The system’s performance was judged by the number of pilots who successfully flew flight simulators.
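To make the reference-proximity idea more concrete, here is a minimal Python sketch of a simplified, TER-style score: the number of word-level edits needed to turn the MT output into the reference, divided by the reference length. This is an illustration only. Full TER also allows block shifts and applies its own tokenization rules, so the numbers will not match those of an established implementation. The function names are assumptions made for this example.

```python
def word_edit_distance(hyp, ref):
    """Levenshtein distance over word tokens (insert, delete, substitute)."""
    prev = list(range(len(ref) + 1))
    for i, hyp_word in enumerate(hyp, start=1):
        curr = [i]
        for j, ref_word in enumerate(ref, start=1):
            cost = 0 if hyp_word == ref_word else 1
            curr.append(min(prev[j] + 1,          # delete a hypothesis word
                            curr[j - 1] + 1,      # insert a reference word
                            prev[j - 1] + cost))  # substitute or match
        prev = curr
    return prev[-1]


def simplified_ter(hypothesis, reference):
    """Edits per reference word; lower is better, 0.0 is an exact match."""
    hyp = hypothesis.lower().split()
    ref = reference.lower().split()
    if not ref:
        raise ValueError("reference must not be empty")
    return word_edit_distance(hyp, ref) / len(ref)


def mean_score(hypotheses, references):
    """Average simplified TER of one engine over a set of segments."""
    scores = [simplified_ter(h, r) for h, r in zip(hypotheses, references)]
    return sum(scores) / len(scores)
```

Running `mean_score` once per engine over the same set of reference translations gives one number per engine to compare. For real evaluations, an established metric implementation is a safer choice than a hand-rolled one.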

Using a fully automated assessment

Modern, cloud-based translation management systems come with integrated functionality for auto-selecting the best MT engine for every project. For example, Phrase’s machine translation management feature set offers MT autoselect.

Its AI-powered algorithm automatically chooses the best-performing MT engine for each of your projects based on content type and language pair. Additionally, with the MT profiles feature, you can also group engines into profiles and apply them to specific projects. MT autoselect will then identify the optimal engine from your selection for the best possible output.
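MT autoselect is a proprietary feature, and this guide does not describe its internals. Purely to illustrate the general idea of choosing an engine per content type and language pair from a restricted profile, here is a minimal Python sketch. The score table, the engine names, and the `pick_engine` function are hypothetical and are not part of any Phrase API.

```python
def pick_engine(scores, content_type, language_pair, profile=None):
    """Return the best-scoring engine for a content type and language pair.

    scores: dict mapping (engine, content_type, language_pair) -> quality
            score, where higher is better (hypothetical data you collected).
    profile: optional collection of engine names to choose from.
    Returns None when no engine has a score for this combination.
    """
    candidates = {
        engine: score
        for (engine, ctype, pair), score in scores.items()
        if ctype == content_type
        and pair == language_pair
        and (profile is None or engine in profile)
    }
    if not candidates:
        return None
    return max(candidates, key=candidates.get)


# Hypothetical scores, for example from your own blind tests or metrics.
scores = {
    ("engine_a", "legal", "en-de"): 0.71,
    ("engine_b", "legal", "en-de"): 0.78,
    ("engine_c", "legal", "en-de"): 0.80,
    ("engine_a", "ui", "en-fr"): 0.90,
}
best = pick_engine(scores, "legal", "en-de", profile={"engine_a", "engine_b"})
```

Here the profile excludes `engine_c`, so the selection is made only among the engines you have approved for the project.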

Further considerations for MT engine assessment

In addition to the more technical requirements for selecting the best MT engine, there are some other aspects that can have an impact on your choice. Let’s review the most important ones.

Should you use a generic or customized MT engine?

Generic or “general-purpose” MT engines such as Google Translate, Microsoft Translator, and Amazon Translate are not trained on data for a particular domain or topic. Therefore, they’re ideal for general translations. They can often give you a good idea of a text in another language, but they could struggle with some domain-specific terminology.

Custom MT engines, on the other hand, are trained on data from specific domains or niches. The result is a more accurate MT output for that kind of content. A human translator can achieve the same or better output, but they’d first need to read a large amount of already translated target data related to the domain, and a custom engine needs that same kind of data for training. Custom MT, therefore, is only an option when you have a translation memory above a certain number of words.

A custom MT engine is also slightly more expensive and requires more management and periodic maintenance for best results. It’s great if you translate a lot of the same kind of content.

Reviewing MT vendor pricing

While machine translation is generally significantly cheaper than human translation, it still involves costs, and this has to be carefully taken into account when selecting an MT engine. Even if, in general, pricing is comparable, some specific differences in prices and pricing models can have an impact on your project.

For some examples of pricing models, see Google Translate’s “pay-as-you-go” model or DeepL Pro, which comes with a monthly flat fee.

Spend some time reviewing the different pricing approaches of the MT engines you’re considering, and define which is the most appropriate for you.
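When comparing a usage-based model with a flat fee, a quick break-even calculation can help. The Python sketch below is a simplified illustration: it assumes pay-as-you-go pricing per million characters and a single flat monthly fee, and every price passed to it is a placeholder you would replace with the vendors’ current rates. Real plans may include tiers, free quotas, or volume caps that this sketch ignores.

```python
def pay_as_you_go_cost(characters, price_per_million):
    """Monthly cost under a simple per-character pricing model."""
    return characters / 1_000_000 * price_per_million


def break_even_characters(flat_fee, price_per_million):
    """Monthly volume at which a flat fee costs the same as pay-as-you-go."""
    if price_per_million <= 0:
        raise ValueError("price_per_million must be positive")
    return flat_fee / price_per_million * 1_000_000


def cheaper_model(characters, flat_fee, price_per_million):
    """Name of the cheaper model for a given monthly volume."""
    usage_cost = pay_as_you_go_cost(characters, price_per_million)
    return "flat fee" if flat_fee < usage_cost else "pay-as-you-go"
```

Below the break-even volume, paying per character is cheaper; above it, the flat fee wins, provided the plan actually covers that volume.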

Checking legal requirements

There may be some legal requirements regarding the use of MT, and this can also have an impact on which MT engine to use.

You should verify whether any limitations exist regarding data localization, storage, and transfer in your project, for example under the GDPR.

Specific data-handling policies might also be in place, where confidentiality is to be guaranteed—in fact, some companies with very strict data handling will refuse MT altogether, from a lack of knowledge about the technology and the resulting fear that their data might be exposed.

Make sure that the translation agency you’re working with offers secure uploading and data handling. If so, the combination of proper use and the right MT engine greatly reduces the risk of data leaks.

Another area where relying on machine translation can be problematic is legal translation, when court requirements might play a role. In most instances, translations have to be certified, which will not be possible with MT if there is no certified translator involved in, at least, post-editing the MT output.

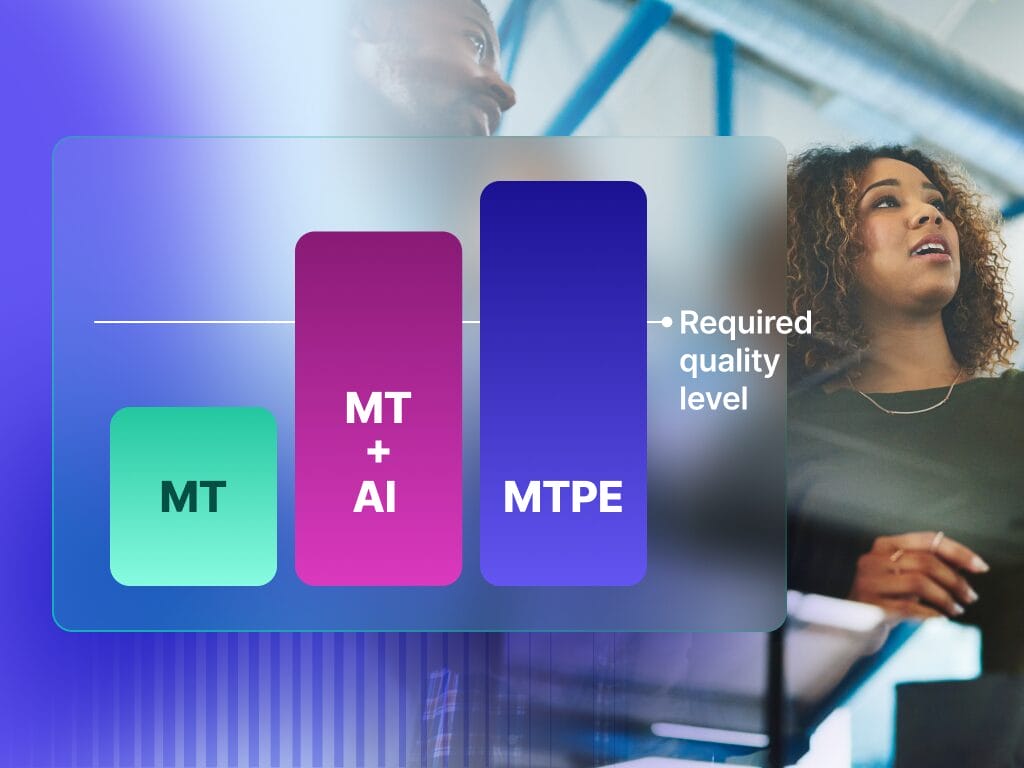

Using raw machine translation or post-editing

In most instances, machine translation post-editing will be necessary after you process the content through the MT engine. Going with a language service provider (LSP) that also provides post-editing will smooth the process and help keep the project consistent. It’s also important that your MT provider can integrate with a translation management system (TMS); fortunately, the most popular MT engines today offer this possibility.

Evaluating the additional capabilities of each MT engine

It’s also worth spending some time investigating the specific features that the MT engines you’re considering have to offer.

For example:

- Glossary support: Glossaries, sometimes referred to as custom terminology, custom vocabulary, or dictionaries, are a collection of words and phrases with a preferred machine translation. They function similarly to term bases but are used by MT engines instead of linguists.

- Formal/informal feature (for example, in DeepL): It allows you to choose between a formal and informal tone of voice for your translation. In particular, the feature determines which pronouns and related words are used in your translation.
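When you compare engines on glossary support, one simple check is whether the preferred translations actually show up in the output. The Python sketch below is an illustrative assumption, not any engine’s API: the glossary is a plain dictionary of source term to preferred target term, and matching is done on whole words without handling inflected forms.

```python
import re


def missing_glossary_terms(source, translation, glossary):
    """List glossary entries that the translation failed to honor.

    An entry counts as missing when its source term occurs in `source`
    but its preferred target term does not occur in `translation`.
    Matching is case-insensitive, whole-word, and ignores inflection.
    """
    missing = []
    for source_term, target_term in glossary.items():
        in_source = re.search(rf"\b{re.escape(source_term)}\b", source, re.IGNORECASE)
        in_target = re.search(rf"\b{re.escape(target_term)}\b", translation, re.IGNORECASE)
        if in_source and not in_target:
            missing.append((source_term, target_term))
    return missing
```

Running this over the same test segments for each engine shows how consistently each one applies your terminology. Languages with rich inflection would need a more tolerant match than this sketch provides.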

The best MT engine will depend on the project at hand

As we’ve seen, there are many different aspects to take into account when choosing the optimal MT engine for your project.

First of all, make sure you have sorted all the items of your translation project according to their requirements, and work from there. While manually assessing which MT engine will work best may look simple, it will usually give less accurate results, which may lead you to make the wrong choice, especially on larger translation projects. Whenever possible, choose the automated approach, as it will likely fit your project better.

Here at Phrase, we’ll be more than happy to help you along the way.