The adoption rate of machine translation (MT) is increasing, but questions still swirl around integrating MT into localization workflows:

- How do you ensure efficiency gains without sacrificing quality?

- How can you work MT into an existing localization workflow?

- How do you get all stakeholders on board?

- What type of content is most suitable for MT?

- Which language pairs should you focus on when using MT?

We’ve put together a group of experts to tackle these MT integration questions—and more:

- Adam LaMontagne, Machine Translation Manager at RWS

- Paula Manzur, Machine Translation Specialist at Vistatec

- Jordi Macias, VP, Operations at Lionbridge

- Elaine O’Curran, Senior MT Program Manager on the AI Innovation Team at Welocalize

- Lamis Mhedhbi, Machine Translation Team Lead at Acolad

With more than 80 years of collective experience in the localization industry, our experts have the know-how that many companies need to successfully integrate MT into localization workflows.

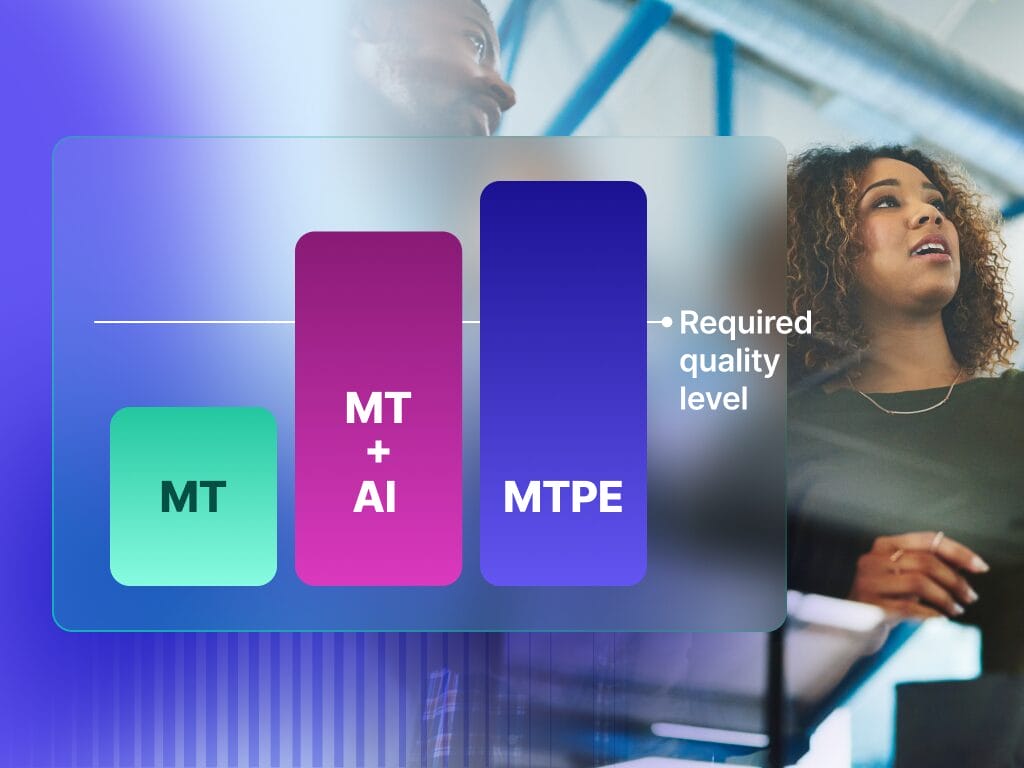

Always understand quality expectations

Machine translation is not one-size-fits-all. You can’t throw all of your content in and expect the quality of the output to meet all of your needs. Before starting with MT, you need to know exactly what outcome you require for the different content types you wish to translate.

Setting quality expectations from the get-go helps not only with MT evaluation but also with measuring the success of your MT program while keeping costs, quality, and timelines under control. You’ll need to communicate these expectations clearly to your MT evaluators and linguists. This helps reduce bias and prevents preferential or unnecessary changes to the MT output, which can increase the post-edit distance and skew efficiency metrics.
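To make the post-edit distance mentioned above concrete, here is a minimal sketch. It assumes the metric is a character-level Levenshtein distance between the raw MT output and the post-edited text, normalized by the longer of the two. Tools differ in how they compute it, and the function names are illustrative rather than taken from any product.

```python
def levenshtein(a: str, b: str) -> int:
    """Count the single-character insertions, deletions and substitutions
    needed to turn string a into string b."""
    prev = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        curr = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def post_edit_distance(mt_output: str, post_edited: str) -> float:
    """Normalized edit distance: 0.0 means the MT was left untouched,
    1.0 means it was effectively rewritten. (Assumed definition.)"""
    longest = max(len(mt_output), len(post_edited))
    if longest == 0:
        return 0.0
    return levenshtein(mt_output, post_edited) / longest
```

A purely preferential change, such as swapping one acceptable synonym for another, raises this number just as much as a genuine correction does, which is why unclear expectations can skew the metric.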

Pro tip: Don’t deploy machine translation without a content type analysis. Create a matrix of your different types of content and what your expectations are for each. Phrase’s Quality Performance Score feature can also help you understand what quality you can expect by providing quality scores for MT at segment level, reducing the uncertainties related to MT results on new content types or languages.

“While quality expectations can differ from project to project, it’s also important to note that improving MT engine quality could affect your overall strategy in the future. Light post-editing (LPE), i.e. raw MT that is only modified where absolutely necessary to ensure the output is legible and conveys the meaning of the source, may no longer make sense. The quality of MT is going up, and on the other hand, the cost of doing machine translation post-editing is going down. The space in which you can play is becoming narrower and narrower,” says Jordi. Instead, LPE may give way to a lighter check in which native-language reviewers, rather than professional translators, look only for critical errors.

An MT deployment checklist is essential

Besides paying attention to our MT integration checklist, the experts highlight some additional dos and don’ts:

- Do: When evaluating machine translation tools, develop your testing to align with your customer’s needs. You can spend a lot of time testing something the end user cares little about.

- Do: Test a generic engine first. People don’t always consider the volume of source content that will go through MT. When you’re leveraging translation memory for a high volume of words, and only a small percentage of new words are processed by MT, you can end up spending far more on customizing and maintaining your engines than you actually save. “Training an MT engine requires an investment, not only for the cost of the MT provider but also the cost of cleaning up and optimizing the training data,” adds Paula.

- Do: Understand the integration. “If you can’t deliver the engine into your processes in an efficient, ergonomic way, then the quality and the suitability of the engine towards your customer’s needs no longer matters,” says Adam. If you invest too much time in MT providers that aren’t supported by your tech stack, you’ll spend extra effort making the MT work for you and push the productivity savings to other parts of the supply chain.

- Don’t: Simply upload your glossaries to your engine. Ask a linguist to help you clean your glossaries and get them ready for MT. Glossaries only take you so far when training an engine, and poorly prepared ones can create a lot of noise and ruin the output.

MT is not a “set-it-and-forget-it” solution

There is a common misconception that once you’ve set up your MT workflow, you can sit back, relax, and marvel at your efficiency gains. This isn’t true. At the start of your MT journey, you’ll spend time and resources on set-up and engine training, but it’s easy to forget that MT engines are constantly interacting with new data. The results will change over time, so you need to understand how your engines are handling the input and how your human editors are interacting with the output. Capturing this data, as well as post-editing data, will help identify possible under-editing and over-editing and enable you to continuously fine-tune your processes, keeping the MT lifecycle healthy.
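As a rough illustration of how captured data might surface under-editing and over-editing, the sketch below flags segments using three per-segment values: an estimated MT quality score, a normalized post-edit distance, and the number of errors found in a later review. The record fields and all thresholds are hypothetical and would need tuning against your own data.

```python
from dataclasses import dataclass


@dataclass
class SegmentRecord:
    segment_id: str
    mt_quality: float     # hypothetical estimated MT quality, 0.0 to 1.0
    edit_distance: float  # normalized post-edit distance, 0.0 to 1.0
    review_errors: int    # errors found when the post-edited text was reviewed


def classify(record: SegmentRecord,
             high_quality: float = 0.9,
             heavy_edit: float = 0.5,
             light_edit: float = 0.1) -> str:
    """Label a segment; the default thresholds are illustrative only."""
    # Good MT that was still heavily rewritten suggests preferential changes.
    if record.mt_quality >= high_quality and record.edit_distance >= heavy_edit:
        return "possible over-editing"
    # Barely touched MT that still contains errors suggests missed corrections.
    if record.edit_distance <= light_edit and record.review_errors > 0:
        return "possible under-editing"
    return "ok"


records = [
    SegmentRecord("seg-1", mt_quality=0.95, edit_distance=0.70, review_errors=0),
    SegmentRecord("seg-2", mt_quality=0.60, edit_distance=0.05, review_errors=2),
    SegmentRecord("seg-3", mt_quality=0.80, edit_distance=0.30, review_errors=0),
]
flags = {r.segment_id: classify(r) for r in records}
```

Tracking how the share of flagged segments moves over time, per engine and per language pair, is one way to notice that a workflow needs retuning.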

Pro tip: One way to make it easier to adapt your workflows to new performance data is to use advanced MT management features. For example, Phrase Language AI, our machine translation add-on, can ensure that you always use the best performing engine for your content using its AI-powered MT autoselect feature.

Machine translation ROI is all about use cases

For a long time, MT had a bad reputation because of its poor-quality output. However, thanks to neural machine translation, there has been a seismic shift in the perception of MT quality. If you’re trying to get stakeholders on board who aren’t localization-savvy, you may still have to work on removing the fear that MT is synonymous with poor quality.

From there, presenting MT return on investment (ROI) is all about use cases and the particular need of the stakeholder. Whether it’s light post-editing or full post-editing, custom engines or generic, you need to find the best use of the budget and how to get the most out of it. “Essentially, it goes back to data, understanding what your stakeholders’ goals are, and then presenting the best workflow that covers the full spectrum of their needs,” explains Jordi.

Pro tip: Basing your MT ROI on translation volumes is a common faux pas. Machine translation isn’t the only technology that could be contributing towards higher productivity. Translation memories produce the bulk of translation output, and you’ll end up with a smaller, perhaps seemingly insignificant, volume actually translated by MT.
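The arithmetic behind this tip can be sketched as follows. The example assumes a simple model in which savings apply only to words not covered by translation memory, and in which engine customization and maintenance is a flat cost. The function names, rates, and volumes are hypothetical.

```python
def mt_word_volume(total_words: int, tm_words: int) -> int:
    """Words actually sent to MT once translation memory matches are removed."""
    return total_words - tm_words


def net_mt_savings(total_words: int, tm_words: int,
                   human_rate: float, post_edit_rate: float,
                   engine_cost: float) -> float:
    """Savings from post-editing MT instead of translating from scratch,
    minus the cost of customizing and maintaining the engine."""
    mt_words = mt_word_volume(total_words, tm_words)
    return mt_words * (human_rate - post_edit_rate) - engine_cost


# Hypothetical program: 1,000,000 words, of which 800,000 come from TM.
program = dict(total_words=1_000_000, tm_words=800_000,
               human_rate=0.10, post_edit_rate=0.06, engine_cost=5_000.0)
mt_words = mt_word_volume(program["total_words"], program["tm_words"])
savings = net_mt_savings(**program)
```

In this made-up scenario only a fifth of the total volume passes through MT, so a figure based on the full word count would overstate the return several times over. With higher TM leverage, the same engine cost can push the net result below zero.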

Capture all the data

Data is integral to a successful localization workflow. That became especially clear from our experts’ answers: whatever the question, data was part of almost every response. Evaluating an MT engine before implementation? You need to collect data. Want to improve the quality of the MT output? Dig into the data. How do you present ROI to stakeholders? Data will come in handy. When should you retrain your engine? Look at the data.

Qualitative data is also important. “Collect post-editors’ feedback regarding the engine quality and do this continuously to adapt to meet productivity goals,” says Lamis. Feedback from clients is also vital for improving engine quality.

When asked if you should track MT data for all projects or rather do spot checks, the experts all agree that capturing all the data would be ideal—as long as there is an efficient way of doing it. “You don’t want to add huge overhead by manually downloading files and scoring them, but if you do it automatically, yes, measure everything,” concludes Elaine.

Pro tip: For advanced users, Phrase offers full access to performance data through the Snowflake integration. You can track your editing time, post-editing analysis, LQA results, and on-time delivery. On top of that, we automatically calculate key MT metrics, such as BLEU, TER, and chrF3, providing you with multiple ways to measure your performance.
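For readers unfamiliar with these metrics, the sketch below shows the idea behind chrF3: a character n-gram F-score that weights recall three times as heavily as precision. It is a simplified, sentence-level variant that ignores whitespace and averages precision and recall over n-gram lengths 1 to 6. It is not how any particular product computes the score, and established implementations differ in details such as whitespace handling and averaging.

```python
from collections import Counter


def char_ngrams(text: str, n: int) -> Counter:
    """Count character n-grams after removing spaces (a simplifying assumption)."""
    chars = text.replace(" ", "")
    return Counter(chars[i:i + n] for i in range(len(chars) - n + 1))


def chrf(hypothesis: str, reference: str,
         max_n: int = 6, beta: float = 3.0) -> float:
    """Simplified sentence-level chrF; beta=3 gives a chrF3-style score in [0, 1]."""
    precisions, recalls = [], []
    for n in range(1, max_n + 1):
        hyp = char_ngrams(hypothesis, n)
        ref = char_ngrams(reference, n)
        if not hyp or not ref:
            continue  # one of the texts is too short for this n-gram length
        overlap = sum((hyp & ref).values())  # clipped n-gram matches
        precisions.append(overlap / sum(hyp.values()))
        recalls.append(overlap / sum(ref.values()))
    if not precisions:
        return 0.0
    precision = sum(precisions) / len(precisions)
    recall = sum(recalls) / len(recalls)
    if precision + recall == 0:
        return 0.0
    return (1 + beta ** 2) * precision * recall / (beta ** 2 * precision + recall)


score = chrf("the cat sat on the mat", "the cat sits on the mat")
```

A score near 1 means the MT output shares most of its character sequences with the reference translation, while a score near 0 means it shares almost none.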